Strengthening SOC Capabilities through AI Integration

Artificial Intelligence (AI) is increasingly recognised as a transformative force in the Security Operations Centre (SOC). Its integration promises to improve the effectiveness, efficiency, and adaptability of security operations in response to an evolving landscape of digital threats. AI technologies are changing how SOC teams operate and reshaping the principles behind security management. By analysing large volumes of data, AI can identify subtle patterns and anomalies that would otherwise evade traditional detection mechanisms.

Current Trends:

- Cybersecurity is now a board-level issue. Responsibility sits with senior leadership and boards, not just technical teams.

- AI tools are making advanced attack capabilities accessible to less-skilled actors, not just elite hackers.

- According to recent industry surveys, over 75% of large enterprises in Europe have already integrated some form of AI or machine learning into their SOC operations as of 2025.

- Spending on AI-driven cybersecurity tools reached approximately £12 billion across EMEA in 2025, a year-on-year growth rate of 18% (source: IDC, “Worldwide Security Spending Guide,” 2025).

- Incident resolution is also faster: AI-powered SOCs report a reduction in mean time to respond (MTTR) from 12 hours to under 3 hours for critical incidents (source: SANS Institute, “SOC Automation Survey,” 2025), which supports a more proactive security posture.
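
To make the MTTR figure above concrete, the following is a minimal sketch of how a team might compute MTTR from its own incident records and express an improvement as a percentage. The incident timestamps and the function names `mttr_hours` and `percent_reduction` are illustrative assumptions, not taken from the cited survey.

```python
from datetime import datetime
from statistics import mean


def mttr_hours(incidents):
    """Mean time to respond, in hours, over (detected, resolved) datetime pairs."""
    durations = [
        (resolved - detected).total_seconds() / 3600
        for detected, resolved in incidents
    ]
    return mean(durations)


def percent_reduction(before, after):
    """Relative reduction from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


# Hypothetical incident records: (detected, resolved)
incidents = [
    (datetime(2025, 3, 1, 8, 0), datetime(2025, 3, 1, 10, 30)),   # 2.5 hours
    (datetime(2025, 3, 2, 14, 0), datetime(2025, 3, 2, 17, 30)),  # 3.5 hours
]

print(mttr_hours(incidents))     # 3.0
print(percent_reduction(12, 3))  # 75.0
```

On these numbers, moving from 12 hours to 3 hours is a 75% reduction, so "under 3 hours" corresponds to a reduction of more than 75%.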

Recent developments include the use of AI-driven analytics for real-time monitoring, predictive modelling to anticipate security incidents, and automated triage of alerts to reduce manual intervention. However, the integration of AI within SOC controls is often hindered by fragmented data sources, inconsistent formats, and limited access to high-quality training datasets. Legacy systems pose significant compatibility challenges, requiring careful orchestration to ensure seamless interoperability with modern AI solutions.
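
As a rough illustration of automated alert triage, the sketch below ranks alerts by a simple composite score and sets low-scoring alerts aside for later review. The `Alert` fields, the 1 to 5 scales, the multiplicative score, and the threshold are all assumptions chosen for the example; a production SOC would tune these against its own data.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    severity: int            # 1 (low) to 5 (critical); hypothetical scale
    asset_criticality: int   # 1 (low) to 5 (business critical); hypothetical scale
    model_confidence: float  # 0.0 to 1.0; estimated chance of a true positive


def triage_score(alert):
    """Composite priority: higher means an analyst should look sooner."""
    return alert.severity * alert.asset_criticality * alert.model_confidence


def triage(alerts, review_later_below=2.0):
    """Split alerts into a ranked analyst queue and a low-priority list."""
    queue = sorted(
        (a for a in alerts if triage_score(a) >= review_later_below),
        key=triage_score,
        reverse=True,
    )
    review_later = [a for a in alerts if triage_score(a) < review_later_below]
    return queue, review_later


alerts = [
    Alert("A2", severity=2, asset_criticality=1, model_confidence=0.3),
    Alert("A3", severity=3, asset_criticality=4, model_confidence=0.5),
    Alert("A1", severity=5, asset_criticality=5, model_confidence=0.9),
]
queue, review_later = triage(alerts)
print([a.alert_id for a in queue])         # ['A1', 'A3']
print([a.alert_id for a in review_later])  # ['A2']
```

Low-scoring alerts are kept for later review rather than discarded, so human analysts retain the final decision.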

Transparency and explainability in AI-driven decisions are critical, as security teams must be able to justify actions and outcomes to stakeholders and regulators. The scarcity of professionals skilled in both cybersecurity and AI further complicates deployment, underscoring the importance of ongoing training and interdisciplinary collaboration. Additionally, organisations must navigate a complex landscape of data protection laws and ethical considerations, balancing innovation with compliance and responsible use.

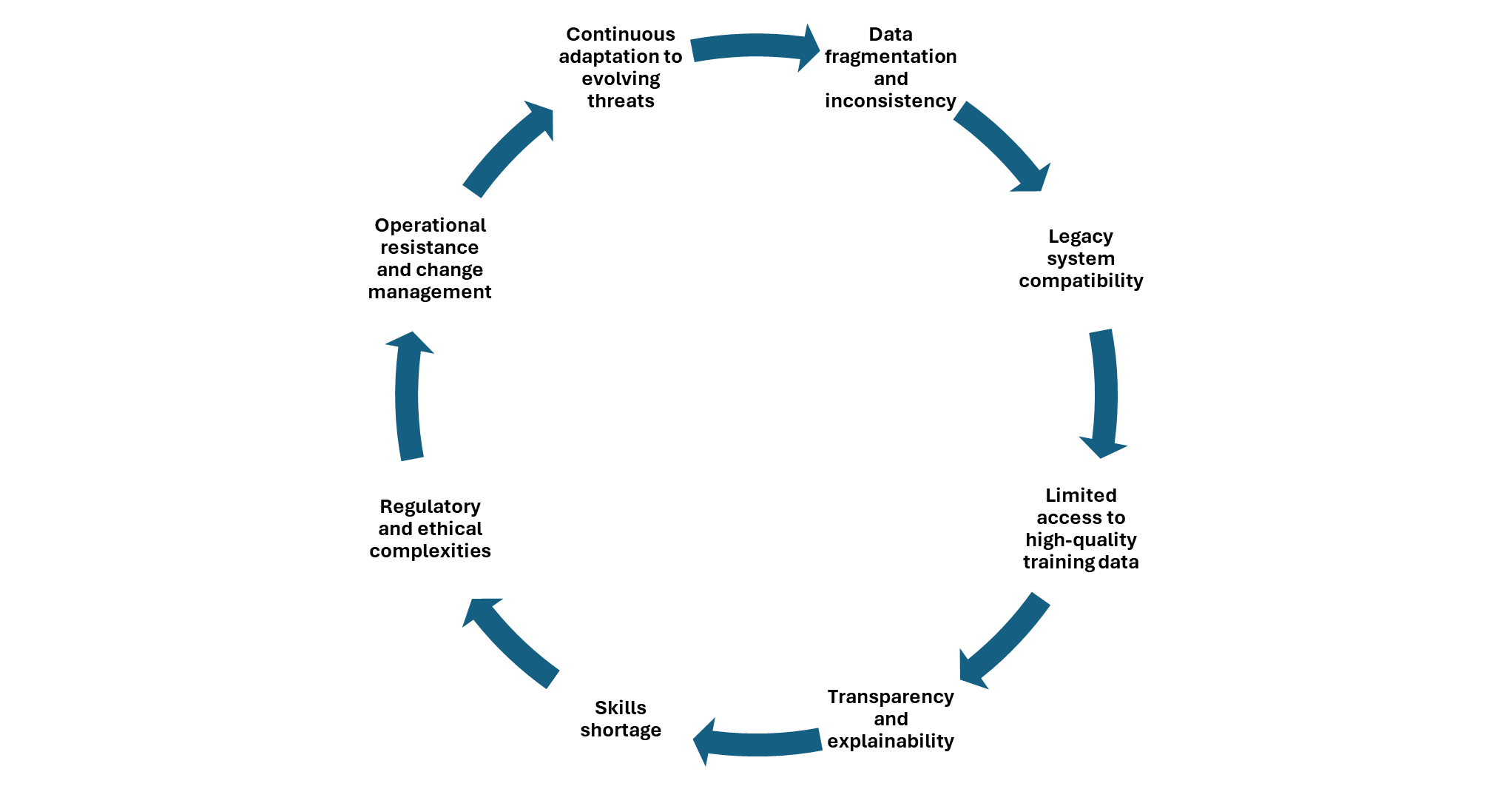

Challenges in AI Adoption for SOC:

Despite its promise, embedding AI into SOC controls presents several obstacles. Key challenges include data quality and availability, the complexity of integrating AI solutions with legacy systems, and the need for transparency in AI decision-making processes.

- Data fragmentation and inconsistency: SOC environments often rely on disparate data sources with varying formats, making it difficult to aggregate, normalise, and analyse information efficiently. This fragmentation can lead to gaps in threat detection and hinder the performance of AI models.

- Legacy system compatibility: Integrating AI solutions with outdated infrastructure can pose significant technical barriers, requiring extensive customisation and orchestration to achieve seamless interoperability without disrupting existing operations.

- Limited access to high-quality training data: The effectiveness of AI in the SOC depends heavily on the availability of comprehensive, clean, and representative datasets. Scarcity of such data can result in biased models and reduced accuracy in threat detection.

- Transparency and explainability: AI-driven decisions must be interpretable to ensure that security teams can justify actions to stakeholders and regulators. Black-box algorithms may undermine trust and complicate compliance with audit requirements.

- Skills shortage: There is a notable lack of professionals with expertise spanning both cybersecurity and AI, making it challenging to deploy, manage, and optimise AI-enabled SOC controls.

- Regulatory and ethical complexities: Navigating evolving data protection laws and ethical standards is essential to balance innovation with responsible use. Organisations must ensure that AI deployments comply with legal requirements and uphold privacy rights.

- Operational resistance and change management: SOC personnel may be resistant to adopting new technologies, especially if there is a perception that AI could replace human judgement. Effective change management strategies and clear communication are necessary to foster acceptance.

- Continuous adaptation to evolving threats: AI models require ongoing tuning and retraining to remain effective as cyber threats evolve. Failure to update algorithms regularly can result in outdated defences and increased risk exposure.
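
The need for ongoing tuning and retraining can be monitored with a very simple check. The sketch below compares the precision of model-raised alerts in a recent window against a baseline window, using analyst verdicts as ground truth, and flags the model for retraining when precision drops by more than a chosen margin. The function names, the verdict lists, and the 0.10 margin are assumptions for illustration only.

```python
def precision(verdicts):
    """Share of model-raised alerts that analysts confirmed as true positives."""
    if not verdicts:
        raise ValueError("no verdicts to evaluate")
    return sum(verdicts) / len(verdicts)


def needs_retraining(baseline_verdicts, recent_verdicts, max_drop=0.10):
    """True when recent precision has fallen more than `max_drop` below baseline."""
    drop = precision(baseline_verdicts) - precision(recent_verdicts)
    return drop > max_drop


# Hypothetical analyst verdicts: True = confirmed threat, False = false positive
baseline = [True] * 8 + [False] * 2  # precision 0.8
recent = [True] * 6 + [False] * 4    # precision 0.6

print(needs_retraining(baseline, recent))    # True
print(needs_retraining(baseline, baseline))  # False
```

Precision alone does not capture missed threats, so a fuller check would also track recall where confirmed incidents are available.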

Organisations also face difficulties in recruiting personnel with both security and AI expertise, as well as ensuring compliance with regulatory requirements and ethical standards.
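
The data fragmentation challenge listed above is often tackled by mapping each source into one common schema before any model sees the data. The sketch below shows the idea for two sources. The record layouts, field names, and the `from_firewall` and `from_endpoint` helpers are hypothetical and do not correspond to any specific product.

```python
from datetime import datetime, timezone


def from_firewall(record):
    """Hypothetical firewall export: epoch seconds and short field names."""
    return {
        "timestamp": datetime.fromtimestamp(record["ts"], tz=timezone.utc).isoformat(),
        "source_ip": record["src"],
        "event_type": record["action"].lower(),
        "origin": "firewall",
    }


def from_endpoint(record):
    """Hypothetical endpoint agent export: ISO 8601 UTC string with a trailing Z."""
    parsed = datetime.strptime(record["time"], "%Y-%m-%dT%H:%M:%SZ")
    return {
        "timestamp": parsed.replace(tzinfo=timezone.utc).isoformat(),
        "source_ip": record["host_ip"],
        "event_type": record["eventName"].lower(),
        "origin": "endpoint",
    }


# One normaliser per source; adding a source means adding one function here.
NORMALISERS = {"firewall": from_firewall, "endpoint": from_endpoint}


def normalise(origin, record):
    """Convert a raw record from a known source into the common schema."""
    return NORMALISERS[origin](record)


fw = normalise("firewall", {"ts": 0, "src": "10.0.0.1", "action": "DENY"})
ep = normalise("endpoint", {"time": "2025-01-01T12:00:00Z",
                            "host_ip": "10.0.0.2", "eventName": "ProcessStart"})
print(fw["timestamp"])  # 1970-01-01T00:00:00+00:00
print(ep["timestamp"])  # 2025-01-01T12:00:00+00:00
```

Once every record carries the same keys and a UTC timestamp, events from different tools can be aggregated and analysed together.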

Industry Best Practices:

Integrating artificial intelligence (AI) into Security Operations Centre (SOC) management is increasingly recognised as essential for advanced defence in today’s cyber threat landscape. Best practices begin with adopting AI-driven threat detection and response tools, which enable faster identification and mitigation of sophisticated attacks. Leveraging machine learning algorithms to analyse vast volumes of security data can help uncover anomalies and patterns that traditional methods might miss.

To ensure effective implementation, organisations should establish clear governance frameworks, including robust policies for data privacy and ethical AI usage. Continuous training of SOC personnel is crucial, focusing on both technical skills and understanding AI outputs to avoid misinterpretation. Automation of repetitive tasks using AI frees up analysts to concentrate on complex investigations, while regular evaluation and tuning of AI models maintain accuracy and relevance.

Collaboration across teams, sharing threat intelligence, and integrating AI solutions with existing security infrastructure further enhance the SOC’s defensive capabilities. Finally, maintaining transparency in AI-driven decisions and keeping abreast of evolving industry standards help the SOC remain resilient against emerging threats.

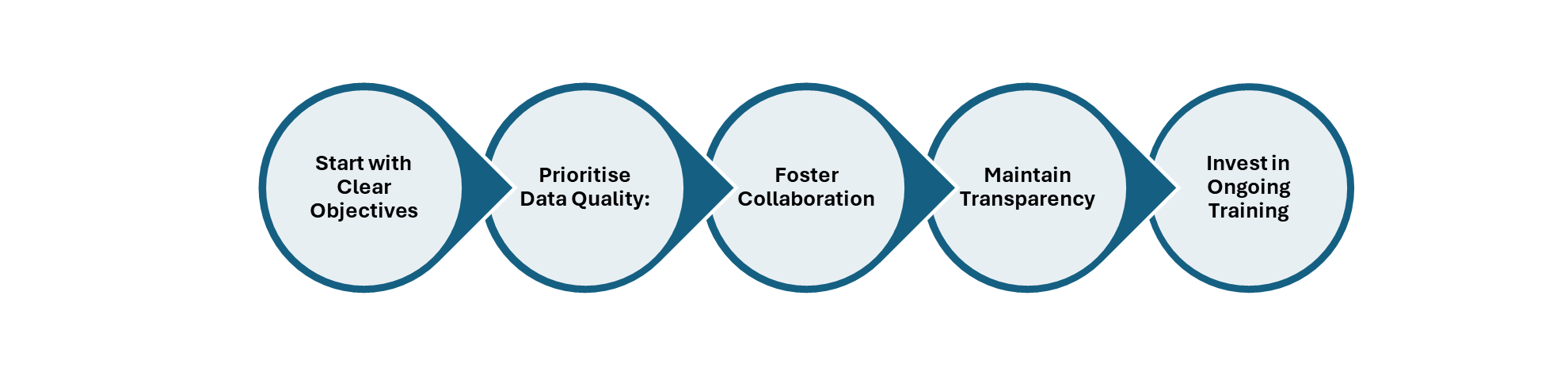

Key best practices to sustain:

- Start with clear objectives: Define measurable goals for AI integration, focusing on specific SOC outcomes such as improved threat detection or reduced response times.

- Prioritise data quality: Ensure that AI systems are trained and operated using comprehensive, accurate, and representative datasets.

- Foster collaboration: Encourage close cooperation between AI specialists, security analysts, and IT teams to maximise value and minimise risks.

- Maintain transparency: Implement mechanisms for explaining AI decisions, supporting auditability and regulatory compliance.

- Invest in ongoing training: Provide continuous professional development to SOC staff on AI concepts, capabilities, and ethical considerations.

- Regularly review and update controls: Periodically assess the effectiveness of AI-enabled SOC controls, adapting to changes in threats and technologies.
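
One possible mechanism for the "Maintain transparency" practice above is to record, alongside each score, how much each input contributed to it. The sketch below does this for a simple linear risk score. The feature names and weights are invented for the example, and real detection models are usually more complex and may need dedicated explainability tooling.

```python
import json

# Hypothetical weights for a simple linear risk score; not from any real product.
WEIGHTS = {"failed_logins": 0.5, "new_device": 2.0, "off_hours": 1.0}


def explain_score(features):
    """Return the total score plus each feature's contribution, largest first."""
    contributions = {
        name: WEIGHTS[name] * value for name, value in features.items()
    }
    ranked = dict(
        sorted(contributions.items(), key=lambda item: item[1], reverse=True)
    )
    return {"score": sum(contributions.values()), "contributions": ranked}


# Hypothetical observation for one user session
record = explain_score({"failed_logins": 6, "new_device": 1, "off_hours": 0})

# The JSON form can be stored with the alert as an audit trail entry.
print(json.dumps(record, indent=2))
```

For this input the score is 5.0, with `failed_logins` contributing 3.0 and `new_device` contributing 2.0, which gives an analyst or auditor a direct answer to why the alert was raised.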

In addition to the best practices outlined above, organisations must remain vigilant regarding evolving regulatory expectations when integrating AI into SOC operations. Regulatory bodies increasingly require robust documentation of AI system decision-making processes, data handling protocols, and the ability to demonstrate compliance with data protection standards such as the UK GDPR and similar frameworks. It is essential to conduct regular audits and risk assessments to ensure AI models do not introduce bias, infringe on privacy rights, or compromise security. Furthermore, organisations should stay informed about sector-specific guidance and emerging regulations, adapting their SOC controls to maintain both operational excellence and legal compliance.

As organisations continue to integrate AI into their SOC operations, it is crucial to look ahead and anticipate future trends that will shape the security landscape. The ongoing evolution of AI technologies promises more sophisticated threat detection, greater automation, and enhanced collaboration between human analysts and intelligent systems. Staying proactive by monitoring technological advancements, adapting to regulatory changes, and fostering an agile, learning-oriented culture will enable SOC teams to remain resilient and effective in the face of emerging challenges. By embracing innovation and maintaining a strong ethical foundation, organisations can ensure their security strategies are both robust and future-proof.

Disclaimer:

“The views and opinions expressed by Kavitha in this article are solely her own and do not represent the views of her company or her customers.”

About the author:

Kavitha Srinivasulu – Senior cyber risk and resilience executive with over 22 years of global leadership experience advising Boards and Executive Committees across Financial Services, Healthcare, Retail, Technology, and regulated industries. Delivered and led large-scale, regulator-driven cybersecurity, AI-driven, PCI, and SOC transformations for Tier-1 banks, global healthcare organisations, and highly regulated enterprises operating across the UK, EU, USA, APAC, and ANZ. Trusted advisor to Boards, C-suite, regulators, and global enterprises, consistently delivering resilient, compliant, and scalable cyber operating models.