Vulnerability management has long been a systematic process of identifying, evaluating, treating, and reporting security weaknesses in IT systems. It encompasses regular scanning of systems, rigorous assessment of discovered vulnerabilities, and timely remediation to mitigate potential risks. Effective vulnerability management involves not only technical solutions but also well-defined policies, consistent monitoring, and collaboration across teams to ensure all assets remain protected. Generative AI models, which have matured rapidly in recent years, can produce new data, content, or insights based on large datasets. The intersection of these two fields is transforming how organisations detect, assess, and remediate vulnerabilities, offering innovative approaches to bolster cybersecurity defences.

The rapid evolution of cyber threats has made vulnerability management a cornerstone of modern security strategies. Organisations are constantly challenged to identify, assess, and remediate vulnerabilities before they can be exploited by malicious actors. Traditional approaches, while effective to an extent, often struggle to keep pace with the sheer volume and complexity of emerging threats. Generative AI represents a transformative shift in this landscape, offering the ability to automate analysis, enhance threat prediction, and streamline response processes. By leveraging advanced machine learning techniques, businesses can gain deeper insights into their security posture and proactively address weaknesses, ultimately improving resilience and reducing risk exposure in a dynamic digital environment.

AI is increasingly being integrated into vulnerability management, with generative models used to analyse threat patterns and predict potential weaknesses. Organisations are leveraging automation to improve detection speed and accuracy, reducing manual workload and human error. Emerging technologies such as large language models and advanced neural networks enable real-time analysis of system logs, code, and configurations, helping identify vulnerabilities before they are exploited.

Emerging Trends:

Recent industry surveys highlight a marked shift towards AI-powered vulnerability management solutions that increase efficiency and reduce manual effort. Some of the emerging trends are:

- The adoption of generative AI in vulnerability management has increased by over 45% among large enterprises since 2025, reflecting a rapid shift towards automated risk detection.

- According to a 2025 Cybersecurity Industry report, over 62% of organisations in the UK and Ireland have adopted generative AI tools for threat identification, representing a 20% increase compared to the previous year.

- Automated vulnerability scanning has seen a similar rise, with nearly 70% of security teams now leveraging AI-driven platforms to streamline detection and analysis.

- Recent survey respondents indicated that AI integration has reduced remediation times for identified vulnerabilities across their infrastructure by an average of 35%, significantly improving operational efficiency and risk mitigation.

- Gen AI-powered tools now identify vulnerabilities up to 60% faster than traditional methods, significantly reducing the average time to patch from weeks to days.

- The integration of gen AI with existing vulnerability management platforms has led to a 25% reduction in false positives, streamlining the workload for IT security professionals.

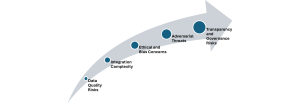

Challenges in integrating Gen AI into Vulnerability Management –

Despite its promise, integrating generative AI into vulnerability management presents several challenges. Data quality and availability remain critical, as AI models rely on comprehensive and accurate datasets to function effectively. Integration with existing security tools and workflows can be complex, requiring robust APIs and interoperability standards. Ethical concerns, including bias in AI outputs and the risk of adversarial attacks, necessitate careful oversight and transparent governance.

Some of the key challenges are:

- Data Quality Risks: Gen AI models depend on vast amounts of reliable data. Incomplete or inaccurate datasets can result in missed vulnerabilities or false positives, undermining overall security.

- Integration Complexity: Incorporating AI into existing security workflows often requires significant technical adjustments, risking operational disruptions or incompatibilities if not managed carefully.

- Ethical and Bias Concerns: AI outputs may reflect biases present in training data, potentially prioritising or overlooking certain vulnerabilities, which can lead to unfair or ineffective risk mitigation.

- Adversarial Threats: AI systems themselves can be targeted by adversarial attacks, where malicious actors manipulate input data to deceive detection algorithms and evade security controls.

- Transparency and Governance Risks: Lack of clear oversight and explainability in AI decision-making processes may hinder accountability and compliance with regulatory standards.

Implementation Models for Gen AI-Driven Vulnerability Management –

Gen AI-driven vulnerability management involves leveraging generative artificial intelligence to identify, prioritise, and remediate security vulnerabilities. Implementation models typically include automated threat detection, predictive risk analysis, and adaptive response frameworks.

By integrating Gen AI with existing security tools, guided by a clear design and well-defined objectives, organisations can benefit from faster vulnerability discovery, contextual risk assessments, and intelligent automation of mitigation actions, ultimately enhancing their overall cyber defence posture. Some of the recommended models are:

- Gen AI-driven Vulnerability Scanning: Generative AI models can automate vulnerability scanning, rapidly identifying potential threats across networks and endpoints.

- Automated Remediation: AI-powered systems can suggest or execute remediation actions, reducing response times and minimising risk exposure.

- Risk Prioritisation: Advanced AI algorithms assess vulnerabilities based on potential impact and likelihood, enabling security teams to focus on critical issues first.
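
The risk prioritisation model above can be illustrated with a minimal sketch. It assumes each finding already carries an impact value (on a 0 to 10 scale) and an estimated likelihood of exploitation (0 to 1), and it ranks findings by their product. The `Finding` class, the field names, the scoring formula, and the sample values are all hypothetical and chosen for illustration; a real deployment would take these inputs from its own scanners and prediction models.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    identifier: str    # hypothetical finding ID, not a real CVE
    impact: float      # assumed scale: 0.0 (none) to 10.0 (critical)
    likelihood: float  # assumed scale: 0.0 to 1.0 probability of exploitation


def risk_score(finding: Finding) -> float:
    """Simple illustrative score: impact weighted by likelihood."""
    return finding.impact * finding.likelihood


def prioritise(findings: list[Finding]) -> list[Finding]:
    """Return findings ordered from highest to lowest risk score."""
    return sorted(findings, key=risk_score, reverse=True)


# Made-up sample data for illustration only.
findings = [
    Finding("VULN-A", impact=9.8, likelihood=0.05),
    Finding("VULN-B", impact=7.5, likelihood=0.60),
    Finding("VULN-C", impact=5.0, likelihood=0.80),
]

ranked = prioritise(findings)
for finding in ranked:
    print(finding.identifier, round(risk_score(finding), 2))
```

In this made-up data, the finding with the highest impact ranks last because its estimated likelihood of exploitation is low, which is the kind of trade-off that impact-and-likelihood scoring is meant to surface.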

Industry Best Practices –

Gen AI can significantly strengthen vulnerability management by automating threat detection, prioritisation, and remediation. Industry best practices recommend integrating AI-driven analytics to rapidly identify vulnerabilities, leveraging machine learning models to predict exploitation likelihood, and automating patch management processes. Continuous monitoring and feedback loops ensure the AI adapts to evolving threats, whilst maintaining robust data privacy and ethical standards. Collaboration between security teams and AI experts is essential for effective deployment and ongoing optimisation. Some of the key best practices are:

- Robust Data Management: Implement rigorous data validation and cleansing processes to ensure AI models are trained on high-quality, representative datasets, thereby minimising the risk of inaccurate or biased outputs.

- Security by Design: Incorporate security considerations throughout the AI system development lifecycle, including regular threat modelling, vulnerability assessments, and secure coding practices.

- Regular Auditing and Monitoring: Continuously monitor AI-driven tools and processes for anomalies and conduct periodic audits to ensure compliance with security and privacy requirements.

- User Training and Awareness: Educate staff and stakeholders on the capabilities, limitations, and risks of AI in vulnerability management to promote responsible usage and informed decision-making.

- Incident Response Planning: Develop and regularly test incident response plans that address AI-specific threats, ensuring rapid containment and recovery in the event of compromise.

- Governance: Establish clear AI governance frameworks to ensure accountability, transparency, and compliance with regulatory standards.

- Continuous Improvement: Regularly update AI models and security processes to adapt to evolving threat landscapes and emerging vulnerabilities.
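
As one illustration of the data validation and cleansing practice listed above, the following sketch filters scanner records before they reach a model. It is a minimal example under stated assumptions: the record fields (`asset`, `vuln_id`, `severity`), the 0 to 10 severity range, and the `clean_findings` helper are hypothetical and not taken from any particular tool.

```python
def clean_findings(records):
    """Split records into (valid, rejected) using simple illustrative checks."""
    required = {"asset", "vuln_id", "severity"}  # assumed mandatory fields
    seen = set()
    valid, rejected = [], []
    for record in records:
        # Reject records that are missing any required field.
        if not required.issubset(record):
            rejected.append(record)
            continue
        # Reject severities that are non-numeric or outside the assumed 0-10 range.
        severity = record["severity"]
        if not isinstance(severity, (int, float)) or not 0.0 <= severity <= 10.0:
            rejected.append(record)
            continue
        # Reject duplicates of an (asset, vulnerability) pair already accepted.
        key = (record["asset"], record["vuln_id"])
        if key in seen:
            rejected.append(record)
            continue
        seen.add(key)
        valid.append(record)
    return valid, rejected


# Made-up sample records for illustration only.
records = [
    {"asset": "web-01", "vuln_id": "VULN-A", "severity": 7.5},
    {"asset": "web-01", "vuln_id": "VULN-A", "severity": 7.5},  # duplicate
    {"asset": "db-01", "vuln_id": "VULN-B"},                    # missing severity
    {"asset": "db-01", "vuln_id": "VULN-C", "severity": 42},    # out of range
    {"asset": "app-01", "vuln_id": "VULN-D", "severity": 3.1},
]

valid, rejected = clean_findings(records)
print(len(valid), "valid;", len(rejected), "rejected")
```

Keeping the rejected records, rather than silently dropping them, supports the auditing practice listed above, since reviewers can inspect what was excluded and why.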

Gen AI is reshaping vulnerability management by offering advanced detection, analysis, and remediation capabilities. As adoption grows, organisations must address challenges related to data, integration, and ethics, while embracing best practices for governance and collaboration.

Disclaimer:

“The views and opinions expressed by Kavitha in this article are solely her own and do not represent the views of her company or her customers.”

About the author:

Kavitha Srinivasulu – Senior cyber risk and resilience executive with over 22 years of global leadership experience advising Boards and Executive Committees across Financial Services, Healthcare, Retail, Technology, and regulated industries. Delivered and led large-scale, regulator-driven cybersecurity, AI-driven, PCI, and SOC transformations for Tier-1 banks, global healthcare organisations, and highly regulated enterprises operating across the UK, EU, USA, APAC, and ANZ. Trusted advisor to Boards, C-suite, regulators, and global enterprises, consistently delivering resilient, compliant, and scalable cyber operating models.