Artificial intelligence continues to evolve rapidly, with new models and technological advancements constantly reshaping the digital landscape. While these innovations drive substantial growth and attract considerable investment across industries, they also introduce complex threats that are not easily mitigated. The rise of AI and, more recently, generative AI (GenAI) has brought both remarkable opportunities and significant challenges to organisations worldwide. These technologies have empowered cybercriminals to devise more sophisticated phishing and social engineering attacks, making it increasingly difficult to safeguard sensitive information. It is time for organisations to counteract these AI-driven threats, and this article offers practical solutions for navigating the risks inherent in our swiftly expanding digital environment.

Why GenAI Changes the Game

Generative AI reduces the cost and effort required to produce persuasive content at scale. In cybersecurity discussions, GenAI is often highlighted because it can automate language-heavy tasks, personalise messaging, and accelerate both attacker and defender workflows (e.g., detection, triage, and response). Internal write-ups also note that malicious actors can use AI to create convincing phishing by emulating writing styles and behaviour patterns, and to automate parts of the attack lifecycle.

- Hyper-personalisation: attackers can tailor tone, context, and urgency using public information and stolen data.

- Higher linguistic quality: fewer spelling/grammar cues that users traditionally relied on to spot scams.

- Faster variation testing: rapid A/B testing of subject lines, pretexts, and calls to action.

- Multi-channel social engineering: consistent narratives across email, chat, SMS, and (in advanced cases) voice/video impersonation.

- Automation: scaled reconnaissance, message creation, and post-click engagement (e.g., chatbot-driven credential harvesting).

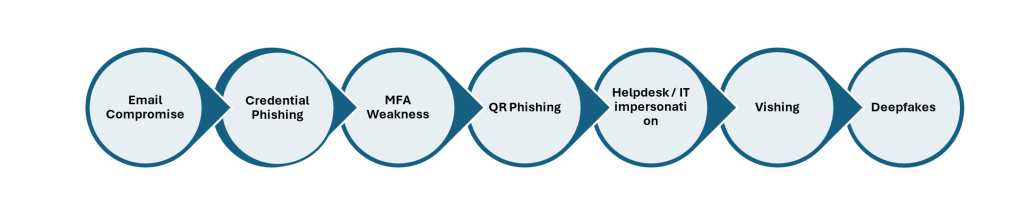

Common GenAI-Enabled Phishing & Social Engineering Patterns:

- Business email compromise (BEC): GenAI helps craft plausible payment change requests, vendor impersonation, and thread-hijacking follow-ups.

- Credential Phishing: links to realistic login pages; content is adapted to the target’s role, project names, and tooling.

- MFA fatigue: social pressure plus repeated push prompts to coerce the victim into approving a sign-in.

- QR phishing: QR codes are used to bypass URL scanning and shift the interaction to mobile devices.

- Helpdesk / IT impersonation: “account locked” / “security verification” narratives to obtain OTPs, reset approvals, or remote access.

- Vishing: victim is instructed to call a number; conversational scripts can be AI-assisted.

- Deepfakes: in advanced scenarios, voice/video impersonation increases credibility and urgency.

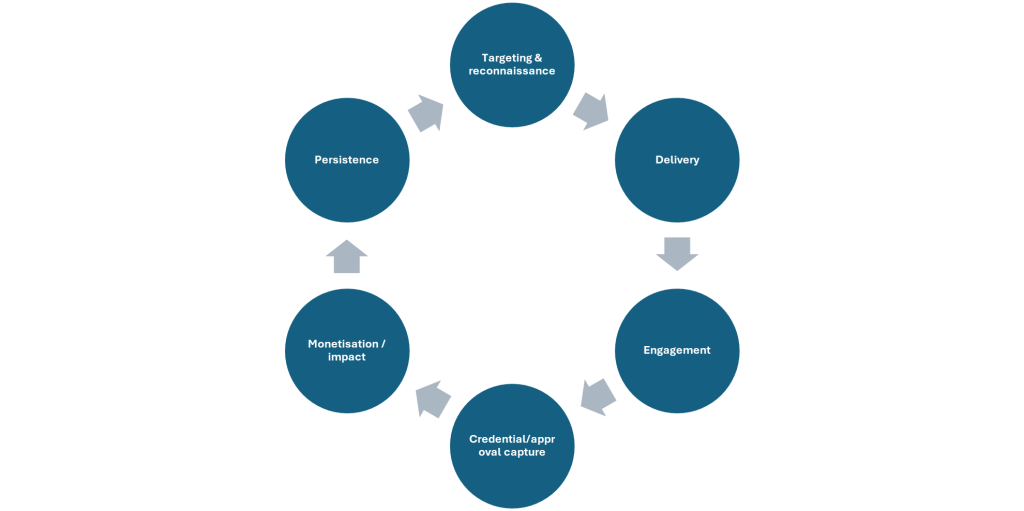

Where to Break the Chain?

Understanding the attack lifecycle is crucial, especially as AI-driven phishing and social engineering tactics become more sophisticated. To effectively disrupt these threats, it’s essential to identify the most vulnerable stages where intervention can have the greatest impact. By proactively monitoring for early signs of AI-generated phishing attempts—such as suspicious emails or messages that use advanced language models to mimic trusted contacts—we can thwart attacks before they escalate.

Additionally, implementing advanced authentication mechanisms and continuous employee awareness training serves as a robust line of defence, particularly during the initial reconnaissance and delivery phases. Focusing on these critical points in the lifecycle not only helps break the chain of attack but also significantly reduces the risk of successful compromise. Let’s work together to pinpoint and strengthen these intervention opportunities to enhance our organisation’s resilience against evolving AI-based threats.

Lifecycle of an Attack:

- Targeting & reconnaissance: attacker gathers org charts, supplier info, and current initiatives. Defence: reduce publicly exposed details; monitor domain/brand abuse.

- Delivery: email, SMS, collaboration tools, social media, or voice. Defence: secure email gateway + DMARC/SPF/DKIM + attachment/URL controls.

- Engagement: victim reads, clicks, scans QR, or replies. Defence: just-in-time warnings, banners, and user coaching.

- Credential/approval capture: passwords, MFA approvals, tokens, or helpdesk resets. Defence: phishing-resistant MFA, conditional access, and strong identity governance.

- Monetisation / impact: fraud payment, account takeover, data exfiltration, malware/ransomware. Defence: transaction controls, anomaly detection, EDR/XDR, rapid containment.

- Persistence: mailbox rules, OAuth app consent abuse, forwarding rules. Defence: posture management, OAuth governance, mailbox audit rules, continuous monitoring.
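
As a rough illustration of the delivery-stage defence above, the sketch below parses a DMARC TXT record and checks whether its policy is actually enforcing. The function names `parse_dmarc` and `is_enforcing` and the sample record are hypothetical, and fetching the record from DNS (the `_dmarc.<domain>` TXT entry) is deliberately left out so the example stays self-contained.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record into its tag=value pairs."""
    tags: dict[str, str] = {}
    for part in record.split(";"):
        part = part.strip()
        if not part or "=" not in part:
            continue  # skip empty or malformed fragments
        key, value = part.split("=", 1)
        tags[key.strip().lower()] = value.strip()
    return tags


def is_enforcing(record: str) -> bool:
    """True when the policy is quarantine or reject and applies to all mail."""
    tags = parse_dmarc(record)
    if tags.get("v", "").upper() != "DMARC1":
        return False
    policy = tags.get("p", "none").lower()
    try:
        pct = int(tags.get("pct", "100"))  # pct defaults to 100 when absent
    except ValueError:
        return False
    return policy in {"quarantine", "reject"} and pct == 100


# Hypothetical sample records, for illustration only.
SAMPLE = "v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com; pct=100"
print(is_enforcing(SAMPLE))              # True
print(is_enforcing("v=DMARC1; p=none"))  # False
```

A record with `p=none` only produces reports, so a check like this can help flag domains that publish DMARC but do not yet ask receivers to act on failures.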

Detecting GenAI Phishing:

AI can improve phishing detection by analysing large volumes of signals quickly, identifying anomalies, and prioritising response. Internal guidance highlights GenAI’s strengths in behavioural analytics, threat detection and response, and automated responses—while also noting challenges such as bias, explainability, and adversarial manipulation (ref: Using Gen AI in Cybersecurity; Implications of AI in Cybersecurity).

- Content and intent signals: NLP models classify suspicious intent (credential harvest, payment change, urgency, secrecy) rather than relying on spelling errors.

- Sender and authentication signals: combine DMARC alignment, sender reputation, and lookalike-domain similarity scoring.

- URL and attachment risk scoring: use ML features from link structure, redirect behaviour, and sandbox detonations.

- Conversation and relationship context: models flag anomalies such as unusual sender-recipient pairings, thread hijacks, and abnormal language for that relationship.

- User and entity behaviour analytics (UEBA): detect suspicious login patterns, token use, and mailbox rule creation that often follow successful phishing.

- Ensemble decisioning: combine multiple weak signals to reduce false positives and improve precision for SOC workflows.

Improving Human Decision-Making Through Targeted Coaching:

- Risk-based segmentation: tailor coaching by role (e.g., finance) and by exposure (frequent external email, supplier interactions).

- Just-in-time nudges: when a user clicks a suspicious link or receives a high-risk message, provide a short, specific explanation and next steps.

- Adaptive simulations: phishing simulations that adapt to evolving lures and focus on behaviours (verify, report, slow down), not “gotcha” testing.

- Positive reinforcement: reward timely reporting and safe actions to increase reporting rates.

- Privacy and ethics: limit monitoring to what is necessary; be transparent about what is measured; avoid punitive use of individual scoring.
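
To make two of the ideas above more concrete, ensemble decisioning and just-in-time nudges, here is a minimal sketch that combines a few weak signals into one score and reuses the signals that fired as the explanation shown to the user. Everything named here (`Message`, `assess`, `nudge`, the weights, thresholds, and keyword list) is a hypothetical assumption for illustration; in particular, simple keyword matching stands in for a real NLP intent model.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical weights, thresholds, and keywords; real values need tuning.
WEIGHTS = {
    "dmarc_fail": 0.35,
    "lookalike_domain": 0.30,
    "urgent_intent": 0.20,
    "new_sender": 0.15,
}
NUDGE_THRESHOLD = 0.5
LOOKALIKE_CUTOFF = 0.85
URGENCY_TERMS = ("urgent", "immediately", "bank details", "verify your account")

EXPLANATIONS = {
    "dmarc_fail": "the sender failed email authentication",
    "lookalike_domain": "the sender domain closely resembles a trusted one",
    "urgent_intent": "the message pushes for a fast payment or credential action",
    "new_sender": "you have not exchanged email with this sender before",
}


@dataclass
class Message:
    sender_domain: str
    dmarc_pass: bool
    body: str
    first_contact: bool


def lookalike_score(domain: str, trusted: list[str]) -> float:
    """Similarity (0..1) to the closest trusted domain; 0 for an exact match."""
    if domain in trusted:
        return 0.0
    return max((SequenceMatcher(None, domain, t).ratio() for t in trusted), default=0.0)


def assess(msg: Message, trusted: list[str]) -> tuple[float, list[str]]:
    """Combine weak signals into one score and keep the reasons that fired."""
    signals = {
        "dmarc_fail": not msg.dmarc_pass,
        "lookalike_domain": lookalike_score(msg.sender_domain, trusted) >= LOOKALIKE_CUTOFF,
        # Keyword matching is a stand-in for an intent classifier.
        "urgent_intent": any(term in msg.body.lower() for term in URGENCY_TERMS),
        "new_sender": msg.first_contact,
    }
    reasons = [name for name, fired in signals.items() if fired]
    return round(sum(WEIGHTS[name] for name in reasons), 2), reasons


def nudge(msg: Message, trusted: list[str]) -> Optional[str]:
    """Return a short, specific warning for high-risk mail, else None."""
    score, reasons = assess(msg, trusted)
    if score < NUDGE_THRESHOLD:
        return None
    return "Be careful: " + "; ".join(EXPLANATIONS[r] for r in reasons) + "."


suspicious = Message("examp1e.com", False, "Urgent: update the supplier bank details today.", True)
print(nudge(suspicious, ["example.com"]))
```

Keeping the reasons alongside the score is a design choice that supports explainability: the same evidence can drive the SOC verdict and the plain-language warning, without exposing any per-user scoring.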

Clear reporting steps increase early detection and help the security team contain campaigns quickly. The following user-friendly steps are taken from internal guidance on reporting phishing across common platforms (ref: How_to_Report_Phishing_on_various_platforms.pdf).

- Desktop Outlook (Windows/Mac): select the suspicious email (avoid opening), click Report in the ribbon, then choose Report Phishing.

- Outlook Web (OWA): open the email, click the three dots (More actions), then select Report > Report Phishing.

- Outlook mobile (iOS/Android): open the email, tap the three vertical dots (top-right), then select Report Phishing.

- Gmail (browser): open the email, click the three dots next to Reply, then choose Report Phishing.

- Gmail mobile (iOS/Android): open the email, tap the three dots (top-right), then select the reporting option the client offers (e.g., Report phishing or Report spam).

Measuring KPIs to Reduce AI Phishing and Social Engineering Attacks:

- User behaviour: phishing simulation click rate, data entry rate, and reporting rate (often the most important improvement metric).

- Detection quality: precision/recall for phishing classification, false-positive rate, and time-to-verdict.

- Response speed: mean time to contain (MTTC) and mean time to respond (MTTR) for phishing campaigns.

- Identity compromise: number of prevented account takeovers, risky mailbox rules blocked, suspicious OAuth grants detected.

- Financial impact: fraud loss avoided (payments), recovery rate, and reduced investigation cost per incident.

- Operational efficiency: analyst time saved via triage automation and improved prioritisation.
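
Several of the metrics above reduce to simple ratios and averages. The sketch below shows one way they might be computed; the function names, field names, and sample figures are hypothetical and are not taken from any specific tool or from real campaign data.

```python
from datetime import datetime
from statistics import mean


def rate(count: int, total: int) -> float:
    """Safe ratio that returns 0.0 when the denominator is zero."""
    return count / total if total else 0.0


def simulation_kpis(sent: int, clicked: int, submitted: int, reported: int) -> dict[str, float]:
    """User-behaviour metrics for one phishing simulation."""
    return {
        "click_rate": rate(clicked, sent),
        "data_entry_rate": rate(submitted, sent),
        "reporting_rate": rate(reported, sent),
    }


def detection_quality(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Classifier quality from a confusion matrix of phishing verdicts."""
    return {
        "precision": rate(tp, tp + fp),
        "recall": rate(tp, tp + fn),
        "false_positive_rate": rate(fp, fp + tn),
    }


def mean_time_to_contain_hours(incidents: list[tuple[datetime, datetime]]) -> float:
    """Average gap between detection and containment, in hours.

    Expects at least one (detected_at, contained_at) pair.
    """
    return mean((contained - detected).total_seconds() / 3600
                for detected, contained in incidents)


# Hypothetical sample figures, for illustration only.
print(simulation_kpis(sent=200, clicked=18, submitted=6, reported=74))
print(detection_quality(tp=90, fp=10, fn=30, tn=870))
print(mean_time_to_contain_hours([
    (datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 11, 0)),
    (datetime(2024, 1, 9, 14, 0), datetime(2024, 1, 9, 18, 0)),
]))
```

Tracking reporting rate next to click rate matters because a falling click rate alone does not show whether users are escalating suspicious messages to the security team.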

Defence against GenAI phishing requires a layered strategy: hardening identity and messaging channels, applying AI-driven detection across content and behaviour signals, and systematically improving human decision-making through targeted coaching and low-friction reporting. With the right governance (explainability, privacy, and adversarial testing), organisations can use AI to reduce social engineering risk while improving response speed and measurable business outcomes.

Disclaimer:

“The views and opinions expressed by Kavitha in this article are solely her own and do not represent the views of her company or her customers.”

Name – Kavitha Srinivasulu

Company – TCS

Designation – Program Director–Cyber Security & Data Privacy

About the Author:

Senior cyber risk and resilience executive with over 22 years of global leadership experience advising Boards and Executive Committees across Financial Services, Healthcare, Retail, Technology, and regulated industries. Delivered and led large-scale, regulator-driven cybersecurity, AI-driven, PCI, and SOC transformations for Tier-1 banks, global healthcare organisations, and highly regulated enterprises operating across the UK, EU, USA, APAC, and ANZ. Trusted advisor to Boards, C-suite, regulators, and global enterprises, consistently delivering resilient, compliant, and scalable cyber operating models.