Artificial Intelligence (AI) is transforming the cybersecurity landscape at unprecedented speed, strengthening security measures while also enabling more sophisticated threats. Organisations are increasingly incorporating AI into their cybersecurity frameworks to detect, prevent, and respond to threats with greater efficiency and precision. This integration brings numerous opportunities, such as the ability to analyse vast amounts of data in real time and identify patterns that may indicate a security breach.

AI can also automate routine tasks, freeing security staff to focus on more complex and strategic issues. These benefits come with challenges that need careful consideration: ensuring the accuracy of AI algorithms, addressing potential biases, and safeguarding against adversarial attacks that exploit AI systems. To fully leverage the advantages of AI in cybersecurity, organisations must adopt a holistic approach that balances innovation with robust risk management.

Facts and Industry Insights:

- The World Economic Forum (2026) identified adversarial AI as one of the top emerging cyber threats.

- The UK National Cyber Security Centre (NCSC) recommends regular model audits and use of explainable AI to maintain trust in automated security systems.

- Gartner forecasts that by 2026, 60% of organisations will rely on AI-driven security operations centres for real-time threat intelligence and automated incident response.

- The Information Commissioner’s Office (ICO) has emphasised the importance of robust data governance when deploying AI, noting that organisations must ensure proper anonymisation and minimisation of personal data to remain compliant with GDPR.

- The Financial Conduct Authority (FCA) has issued guidance emphasising the need for transparent AI algorithms to ensure compliance with anti-money laundering (AML) regulations.

- The Insurance Europe 2026 report highlights that insurers are leveraging AI for real-time risk assessment and claims fraud detection, dramatically improving incident response times and reducing losses due to fraudulent activities.

- Regulatory bodies such as the European Banking Authority (EBA) are moving towards establishing sector-specific governance frameworks for AI, reinforcing the importance of data privacy, model accountability, and incident reporting in the banking, financial services, and insurance (BFSI) domain.

These industry insights point to a landscape where AI is indispensable for cybersecurity, but also one where organisations must proactively address new and evolving risks.

Artificial Intelligence (AI) is rapidly transforming the cybersecurity landscape. Organisations are increasingly integrating AI into their cybersecurity frameworks to detect, prevent, and respond to threats more efficiently. However, embedding AI brings both opportunities and unique challenges that must be carefully considered to maximise its benefits.

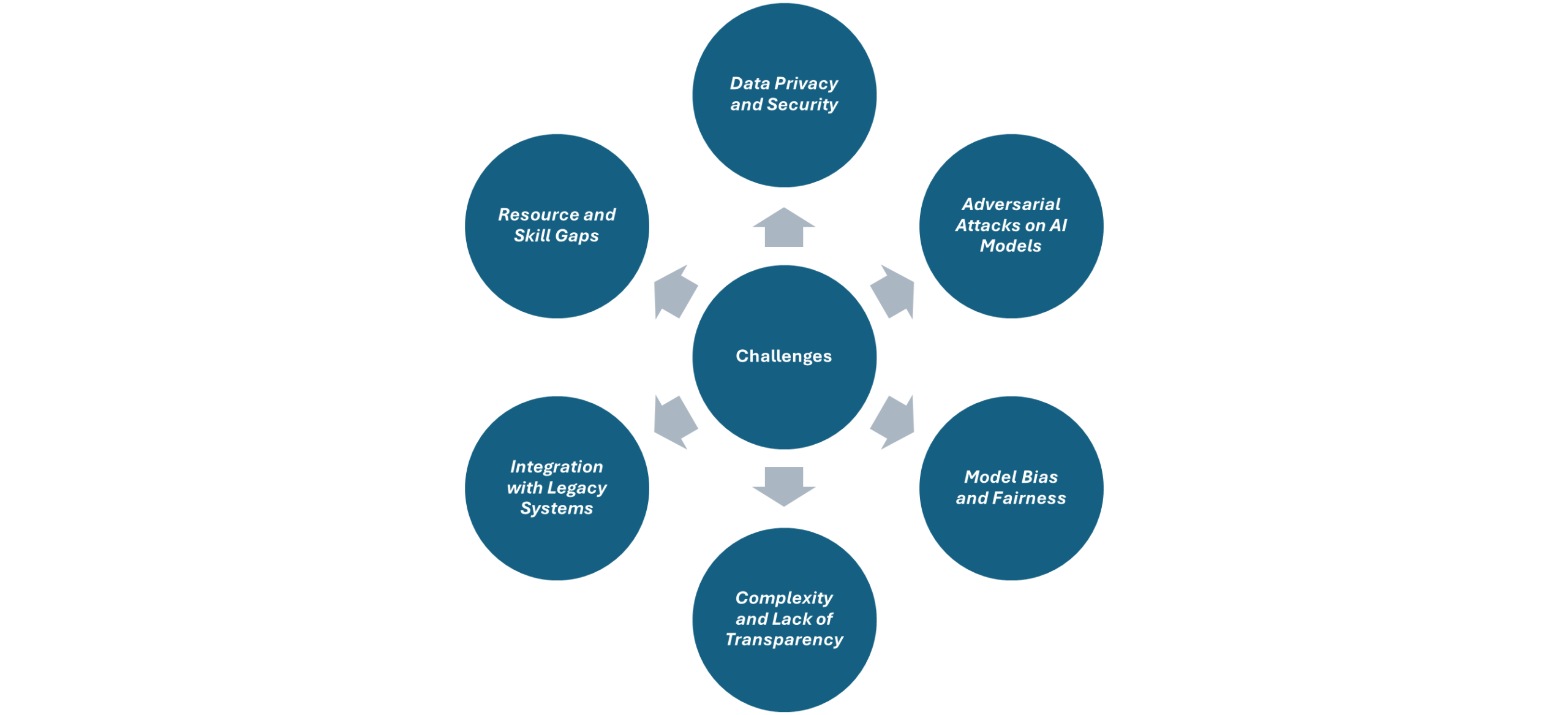

Key Cybersecurity Challenges in Embedding AI:

- Data Privacy and Security – AI models require vast volumes of data for training and operation. Protecting this sensitive data from breaches or misuse is paramount. Exposure of training data can lead to privacy violations, regulatory fines, and reputational damage.

- Adversarial Attacks on AI Models – Cybercriminals can exploit vulnerabilities in AI systems through adversarial attacks, such as manipulating input data to deceive AI detectors or classifiers. This can result in malicious activities going undetected.

- Model Bias and Fairness – AI systems can inherit biases from historical data, leading to unfair or inaccurate threat detection. Biased models may overlook certain attack patterns or disproportionately flag benign activities.

- Complexity and Lack of Transparency – Many AI models, especially deep learning algorithms, function as “black boxes” with limited interpretability. This makes it difficult for security teams to understand, audit, and trust AI-driven decisions.

- Integration with Legacy Systems – Organisations often struggle to integrate advanced AI solutions with existing legacy infrastructure. Compatibility issues can lead to operational disruptions or create new attack surfaces.

- Resource and Skill Gaps – Implementing and managing AI-based cybersecurity controls requires specialised skills that are in short supply. This talent gap can delay adoption or result in misconfigured systems.
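To make the adversarial-attack challenge above concrete, here is a minimal sketch of input manipulation against a toy detector. The linear scoring model, its weights, the threshold, and the feature names are all hypothetical and chosen only for illustration; real detectors and real evasion techniques are considerably more complex.

```python
# Toy linear detector: an event is flagged when its weighted feature sum
# exceeds a threshold. Weights, threshold, and feature names are hypothetical.
WEIGHTS = {"failed_logins": 0.6, "bytes_out_mb": 0.03, "off_hours": 1.5}
THRESHOLD = 4.0


def score(event):
    """Weighted sum of the event's features."""
    return sum(WEIGHTS[name] * event[name] for name in WEIGHTS)


def is_flagged(event):
    """True when the detector would raise an alert for this event."""
    return score(event) > THRESHOLD


def evade(event, step=1.0, max_steps=50,
          mutable=("failed_logins", "bytes_out_mb")):
    """Greedily lower attacker-controllable features until the alert stops.

    Returns the modified event, or None if no evasion was found.
    """
    adv = dict(event)
    for _ in range(max_steps):
        if not is_flagged(adv):
            return adv
        # Only features the attacker controls, and that can still be reduced.
        candidates = [f for f in mutable if adv[f] > 0]
        if not candidates:
            break
        # Reduce the feature that influences the score the most.
        target = max(candidates, key=lambda f: WEIGHTS[f])
        adv[target] = max(0.0, adv[target] - step)
    return adv if not is_flagged(adv) else None


malicious = {"failed_logins": 8, "bytes_out_mb": 40, "off_hours": 1}
evasive = evade(malicious)
print(is_flagged(malicious), evasive)
```

In this sketch the attacker simply spaces out login attempts until the score drops below the threshold, while the activity itself remains malicious. The same loop can be reused defensively as a basic robustness check: if a small, cheap change to the input flips the decision, the detector depends too heavily on a feature the attacker controls.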

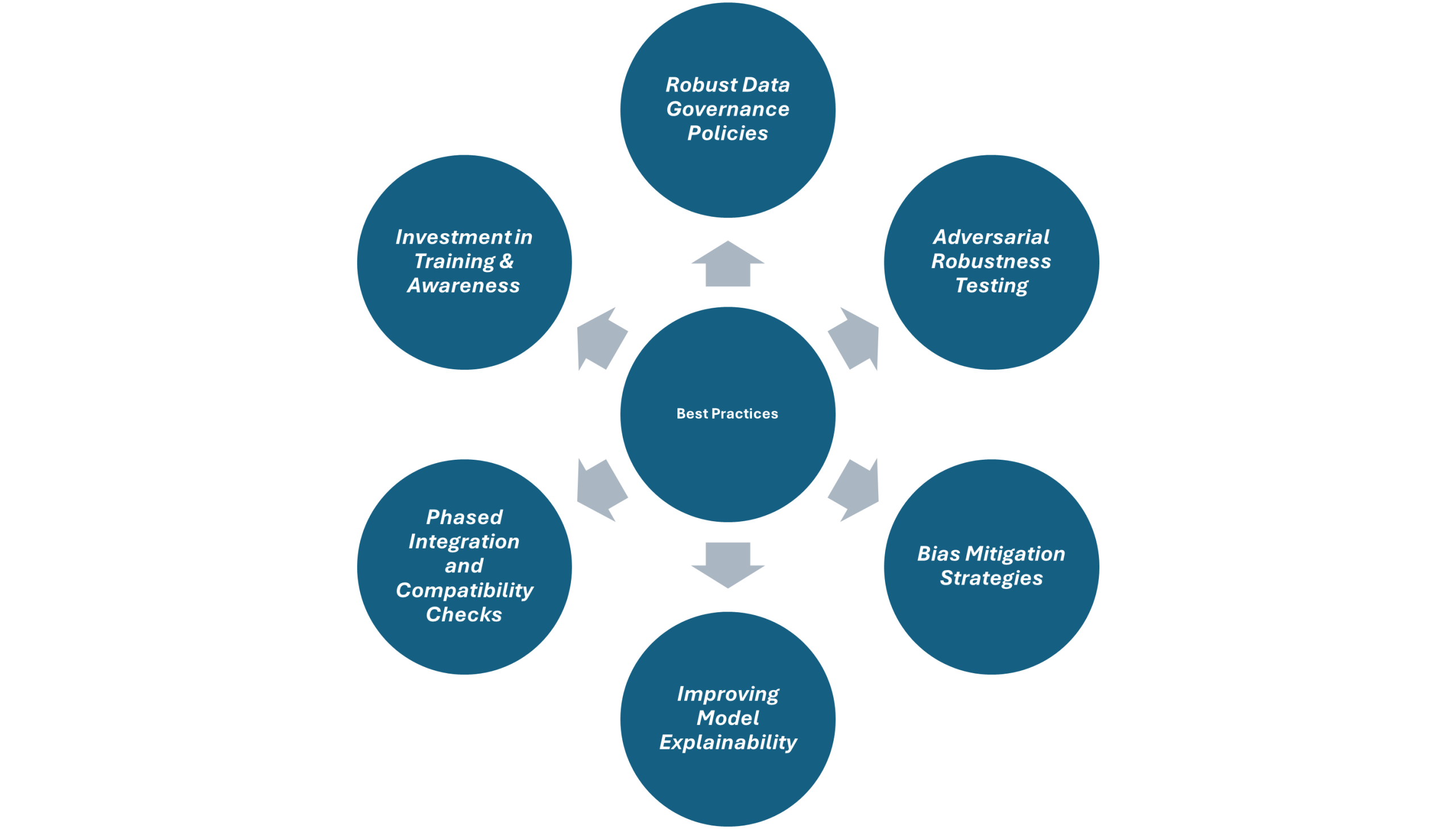

Effective Ways to Overcome the Challenges:

- Robust Data Governance Policies – Implement comprehensive data governance frameworks to ensure that data used for AI is anonymised, encrypted, and handled in compliance with regulations such as GDPR. Regular audits and access controls are essential.

- Adversarial Robustness Testing – Routinely test AI models against adversarial scenarios to identify vulnerabilities. Employ techniques such as adversarial training and model hardening to enhance resilience.

- Bias Mitigation Strategies – Use diverse and representative datasets and employ bias detection tools to identify and correct unfair patterns in model outputs. Continuous monitoring is necessary to maintain fairness.

- Improving Model Explainability – Adopt explainable AI (XAI) techniques to make AI decisions more transparent. Tools such as LIME or SHAP can help security teams interpret and trust AI-driven actions.

- Phased Integration and Compatibility Checks – Integrate AI solutions incrementally, ensuring compatibility through rigorous testing. Use API-based architectures and middleware to bridge legacy systems and modern AI controls.

- Investment in Training & Awareness – Upskill existing staff and recruit AI and cybersecurity experts. Foster collaboration between IT, security, and data science teams to ensure effective deployment and management.
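As one illustration of the data governance point above, the following sketch applies data minimisation and keyed pseudonymisation to a log record before it is used for model training. The field names and the `prepare_record` helper are hypothetical, and the approach assumes the secret key is stored separately from the training data. Keyed hashing is pseudonymisation rather than full anonymisation, so the output should still be treated as personal data under GDPR.

```python
import hashlib
import hmac

# Hypothetical field names; adapt these to your own log schema.
DIRECT_IDENTIFIERS = ("username", "source_ip")
FIELDS_NEEDED_FOR_TRAINING = ("username", "source_ip", "event_type", "bytes_out")


def pseudonymise(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed HMAC-SHA256 digest (hex string)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_record(record: dict, key: bytes) -> dict:
    """Keep only the fields the model needs, then pseudonymise identifiers."""
    # Data minimisation: drop every field that is not on the allow-list.
    minimised = {f: record[f] for f in FIELDS_NEEDED_FOR_TRAINING if f in record}
    # Pseudonymisation: same input and key always give the same token,
    # so per-user behaviour patterns remain learnable.
    for field in DIRECT_IDENTIFIERS:
        if field in minimised:
            minimised[field] = pseudonymise(str(minimised[field]), key)
    return minimised


raw = {
    "username": "alice",
    "source_ip": "10.0.0.5",
    "event_type": "login",
    "bytes_out": 120,
    "email": "alice@example.com",  # not needed for training, so it is dropped
}
print(prepare_record(raw, key=b"example-key-load-from-a-secret-store"))
```

A keyed hash is used instead of a plain hash because usernames and IP addresses come from small, guessable sets: without the key, an attacker could rebuild the mapping by hashing candidate values. Rotating or destroying the key breaks the link between tokens and the original identifiers.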

Embedding AI in organisational cybersecurity controls offers significant improvements in threat detection, speed, and adaptability. However, it introduces challenges such as data privacy risks, adversarial threats, bias, and integration complexities. Organisations must adopt robust governance, invest in adversarial robustness, ensure fairness and transparency, and bridge skill gaps to fully realise AI’s potential in cybersecurity. A proactive, well-governed approach will enable organisations to harness AI’s strengths while mitigating associated risks, thus strengthening overall cyber resilience.

Author: Kavitha Srinivasulu

TCS – Program Director, Cyber Security & Data Privacy

About the Author:

Senior cyber risk and resilience executive with over 22 years of global leadership experience advising Boards and Executive Committees across Financial Services, Healthcare, Retail, Technology, and regulated industries. Has led and delivered large-scale, regulator-driven cybersecurity, AI-driven, PCI, and SOC transformations for Tier-1 banks, global healthcare organisations, and highly regulated enterprises operating across the UK, EU, USA, APAC, and ANZ. Trusted advisor to Boards, C-suite, regulators, and global enterprises, consistently delivering resilient, compliant, and scalable cyber operating models.